
How Civo Kubernetes Routes Pod Traffic (Single Egress IP Explained)

Introduction

On Civo’s managed Kubernetes (built on K3s), worker nodes don’t get public IPs. Only the control plane does.

That means no matter which node your pod lands on, its outbound traffic leaves the cluster through a single public-facing IP. This is intentional. It simplifies networking, but it also changes how you think about egress, IP whitelisting, and external integrations.

Once you understand how Civo routes pod traffic internally, most “which IP do we whitelist?” confusion disappears.

In this post, we’ll cover:

- How Civo uses one IP for all pod egress

- What to whitelist when third parties require static IPs

- Edge cases to watch for in production

How Civo Routes Pod Traffic to the Internet

Architecture: One Public IP Per Cluster

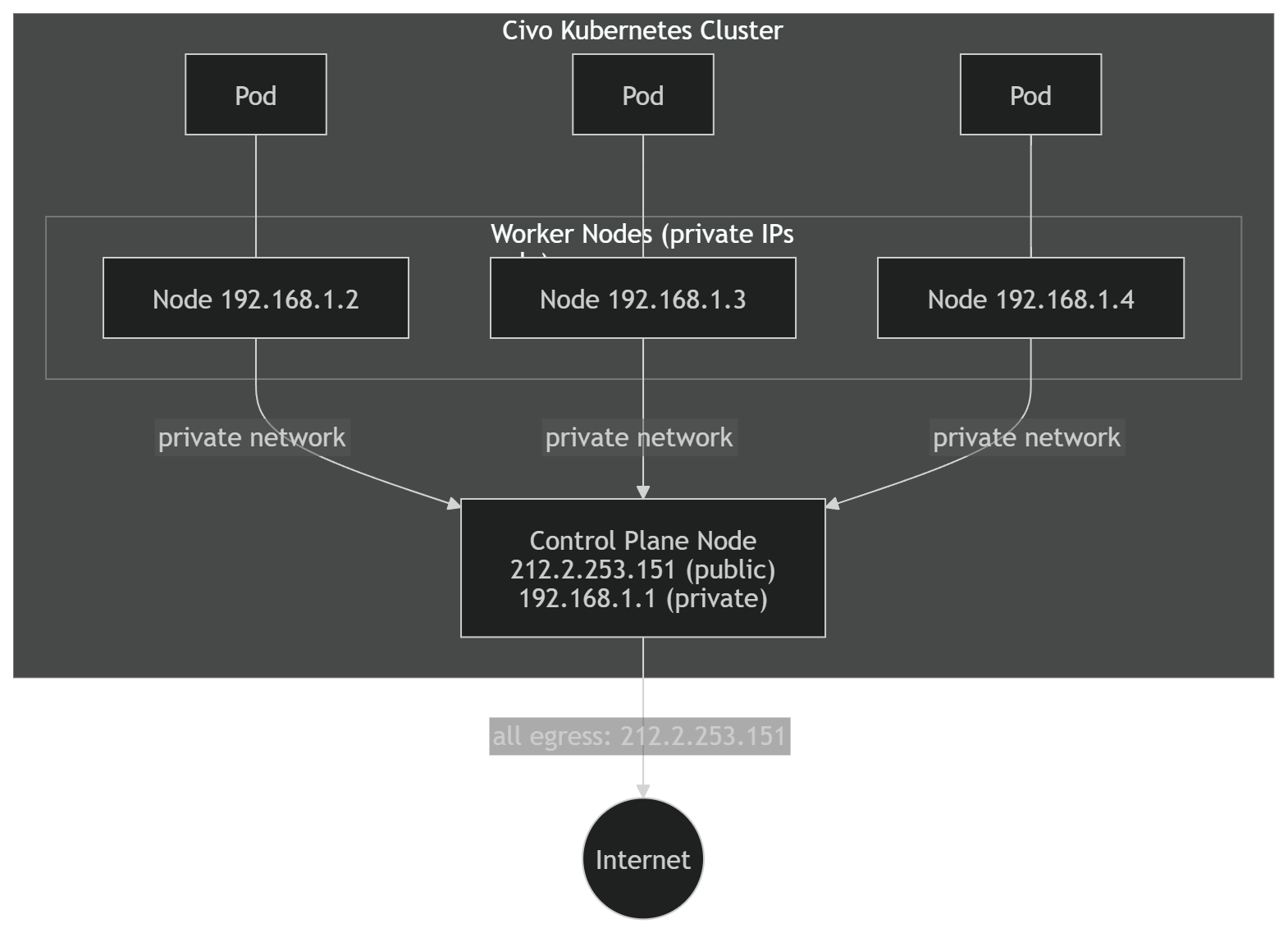

A standard Civo K3s cluster looks like this:

- Only the control plane node has a public IP

- Worker nodes only have private IPs (e.g. `192.168.1.x`)

- All outbound pod traffic is routed through the control plane

So every outbound connection from any pod appears to come from the same public IP.

From the internet’s perspective, the cluster has one identity.

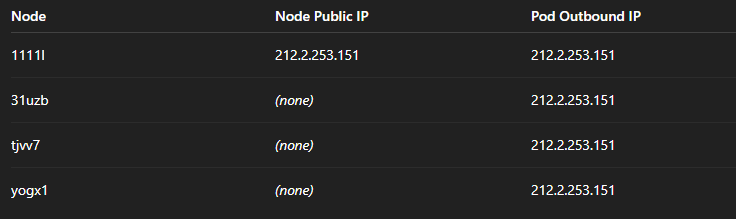

What We Observed in Testing

To verify this behavior, we deployed a DaemonSet running `curl -s ifconfig.me` on every node.

Even pods running on nodes without public IPs reported the same outbound IP — the control plane’s.
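A check like this could be reproduced with a DaemonSet along the following lines. This is a minimal sketch, not the manifest used in the test above: the name `egress-check`, the hourly loop, and the helper function `render_egress_check` are hypothetical. Only the `curlimages/curl` image and the `curl -s ifconfig.me` call appear elsewhere in this post.

```shell
#!/bin/sh
# Print a DaemonSet manifest that runs `curl -s ifconfig.me` on every node.
# The name "egress-check" and the sleep interval are illustrative assumptions.
render_egress_check() {
  cat <<'EOF'
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: egress-check
spec:
  selector:
    matchLabels:
      app: egress-check
  template:
    metadata:
      labels:
        app: egress-check
    spec:
      containers:
        - name: curl
          image: curlimages/curl
          # Log the public IP this pod egresses from, then idle so the pod stays up.
          command: ["sh", "-c", "while true; do curl -s ifconfig.me; echo; sleep 3600; done"]
EOF
}
```

Piping the output into `kubectl apply -f -` creates the DaemonSet, and `kubectl logs -l app=egress-check --prefix` should then show one reported IP per pod. If the routing described here holds, every line carries the same address.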

How It Works Under the Hood

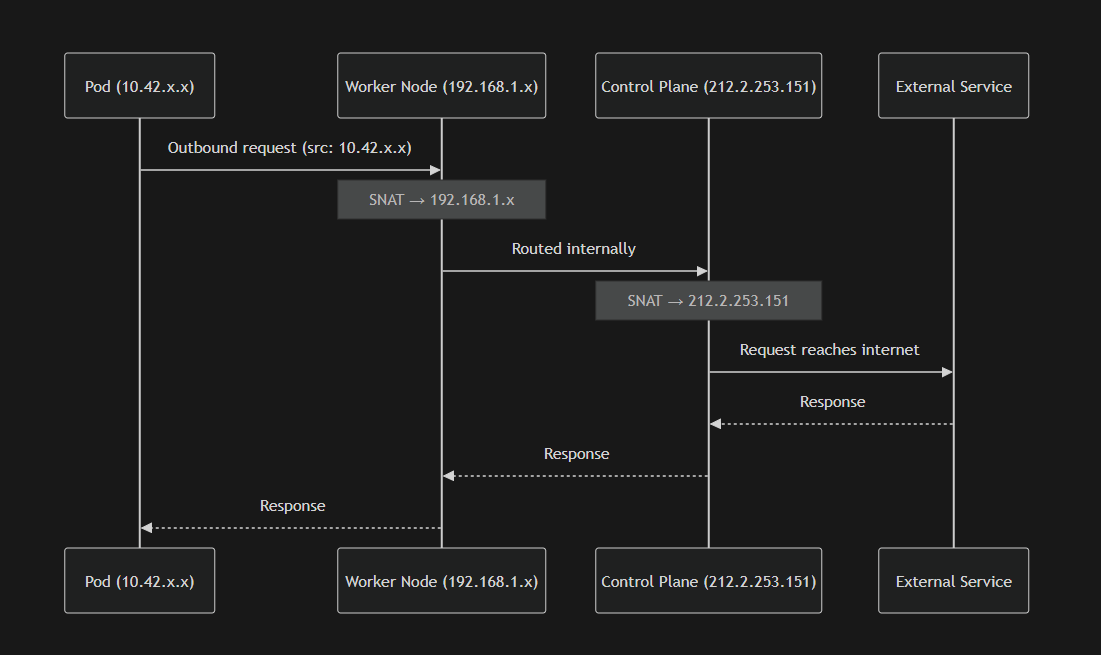

Outbound traffic passes through three stages, and two of them involve NAT.

1. Pod Networking

Pods use private IPs assigned by the CNI (commonly Flannel in K3s), such as `10.42.x.x`. These IPs are internal to the cluster.

2. Node-Level Masquerading

When a pod makes an outbound request:

- Kubernetes performs SNAT

- The pod’s IP (`10.42.x.x`) is translated to the worker node’s private IP (`192.168.1.x`)

This is standard Kubernetes behavior.

3. Control Plane Egress

Because worker nodes do not have public IPs:

- Traffic is routed over Civo’s internal network

- The control plane receives it

- The control plane performs another SNAT

- The source IP becomes the control plane’s public IP

- The packet exits to the internet

The result is simple:

One predictable egress IP for the entire cluster.
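To make the two translations concrete, the sketch below prints the source address an outbound packet would carry at each hop. It only formats text and does not touch real networking. The function name `trace_egress` and the sample addresses are illustrative assumptions; `203.0.113.10` is a documentation-range address standing in for the control plane's public IP.

```shell
#!/bin/sh
# Show the source address seen at each hop for one outbound connection.
# $1 = pod IP, $2 = worker node private IP, $3 = control plane public IP
trace_egress() {
  printf 'pod sends:           src=%s\n' "$1"
  printf 'after worker SNAT:   src=%s\n' "$2"
  printf 'after control SNAT:  src=%s\n' "$3"
}

trace_egress 10.42.1.7 192.168.1.4 203.0.113.10
```

Whatever the first two arguments are, the last line is the only address the remote endpoint ever sees.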

IP Whitelisting Scenarios

What to Whitelist (Outbound Traffic)

If an external system restricts access by source IP — for example:

- APIs

- Databases

- Webhook endpoints

- SaaS services

You should whitelist:

The control plane’s public IP.

Retrieve it with:

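    civo kubernetes show <cluster-name>

or:

    kubectl config view --minify -o jsonpath='{.clusters[0].cluster.server}'

The `kubectl` command prints the API server URL, scheme and port included; the host part of that URL is the address to use.

That’s your cluster’s egress identity.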
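Because the `kubectl` command returns a full server URL rather than a bare address, a small helper can trim it down before it goes into an allowlist. The function below is a sketch using POSIX parameter expansion. The name `server_url_to_host` is hypothetical, and it assumes the host is an IPv4 address or a hostname (bracketed IPv6 literals are not handled).

```shell
#!/bin/sh
# Reduce an API server URL such as https://203.0.113.10:6443 to its host part.
# Assumes an IPv4 address or hostname; bracketed IPv6 literals are not handled.
server_url_to_host() {
  host="${1#*://}"    # drop the scheme, if any
  host="${host%%/*}"  # drop any path
  host="${host%%:*}"  # drop the port
  printf '%s\n' "$host"
}
```

One possible use is `server_url_to_host "$(kubectl config view --minify -o jsonpath='{.clusters[0].cluster.server}')"`. If the result is a DNS name rather than an IP, it still needs resolving before it can go into an IP-based allowlist.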

What Not to Whitelist

Do not whitelist:

- Pod IPs

- Worker private IPs

- LoadBalancer IPs (for outbound cases)

- Reserved IPs

Those are not used for default pod egress.

Inbound vs Outbound Clarification

- If your pods call an external system → whitelist the control plane IP

- If an external system calls your cluster → whitelist the LoadBalancer or Ingress IP

Edge Cases

Control Plane Replacement

If the control plane node is upgraded, recreated, or fails, the public IP may change.

Impact:

- Existing whitelists may break

- Outbound requests may be rejected

Verify egress IP after cluster-level changes:

    kubectl run ip-check --rm -it \
      --image=curlimages/curl \
      --restart=Never -- curl -s ifconfig.me

Multiple Clusters

Each cluster has its own control plane and therefore its own egress IP.

If you run dev, staging, and production clusters, each requires separate whitelist entries.
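With several clusters, and with the possibility of an IP changing after a control plane replacement, it can help to record the expected egress IP per cluster and compare it against what is observed. The helpers below are one way to sketch that. The allowlist format (one `<context> <ip>` pair per line) and the function names `expected_ip_for` and `egress_matches` are assumptions made for this example, not anything Civo provides.

```shell
#!/bin/sh
# Look up the IP recorded for a cluster in an allowlist.
# $1 = allowlist text, one "<context> <ip>" pair per line (hypothetical format)
# $2 = kubectl context name
expected_ip_for() {
  printf '%s\n' "$1" | awk -v ctx="$2" '$1 == ctx { print $2; exit }'
}

# Succeed only when the observed egress IP is non-empty and equals the expected one.
# $1 = expected IP, $2 = observed IP
egress_matches() {
  [ -n "$2" ] && [ "$1" = "$2" ]
}
```

The observed value could come from the `kubectl run ... curl -s ifconfig.me` check shown earlier, run once per cluster with `kubectl --context <name>`. The captured output may include extra text from `kubectl` itself, so it would need trimming to the bare IP before comparison. A failed `egress_matches` for any context is a sign that the corresponding whitelist entry needs updating.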

LoadBalancer vs Cluster Egress

LoadBalancer services get their own public IPs.

Those are for inbound traffic only and do not affect pod egress.

Summary

On Civo Kubernetes, all pod traffic to the internet exits through a single public IP — the control plane’s IP.

That’s the IP to use for outbound whitelisting.

LoadBalancer IPs are for inbound traffic only.

The design is simple and predictable once you understand that the control plane acts as the cluster’s egress gateway.