How to Avoid GitHub Token Rate Limiting Issues | Complete Guide for DevOps Teams

Fix GitHub API rate limit errors in CI/CD pipelines. Learn how to avoid 403 errors using tokens, caching, and GitHub Apps with real DevOps solutions.

Introduction

Your CI/CD pipeline was working fine until suddenly every build started failing with a GitHub API 403 error.

I faced this exact issue during a production deployment. Everything looked fine—no code changes, no infrastructure issues—but pipelines kept failing.

At first, we thought it was a bug in the pipeline, but nothing pointed to the actual issue.

After debugging for hours, it became clear that the problem was not the code, but GitHub API rate limiting.

If you are facing GitHub API rate limit exceeded errors in CI/CD pipelines, this guide will help you fix them effectively.

Quick Fix (TL;DR for busy DevOps engineers): Use authenticated tokens, reduce unnecessary API calls, implement caching, and switch to GitHub Apps for scalable systems.

What is GitHub API Rate Limiting?

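GitHub API rate limiting restricts how many API requests you can make within a specific time window. The primary limit is counted per hour, and GitHub also enforces secondary limits that throttle bursts of requests and too many concurrent requests. This ensures fair usage and prevents abuse.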

In real-world DevOps workflows, this limit can quickly become a bottleneck.

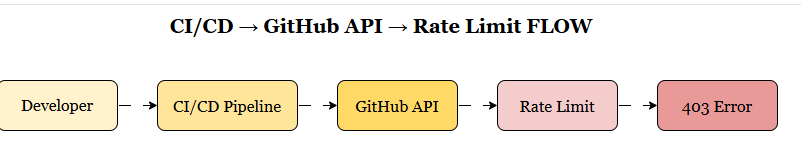
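One way to stay inside the hourly window is to pace requests: spread the requests you have left evenly over the time remaining until the reset. The sketch below assumes you already have the values of the `X-RateLimit-Remaining` and `X-RateLimit-Reset` response headers. The function name and the even-spread pacing policy are illustrative, not part of any GitHub library.

```python
import time


def pacing_delay(remaining, reset_epoch, now=None):
    """Seconds to wait between requests so the remaining budget
    lasts until the rate-limit window resets.

    remaining   -- value of the X-RateLimit-Remaining header
    reset_epoch -- value of the X-RateLimit-Reset header (Unix seconds, UTC)
    now         -- current Unix time; defaults to time.time()
    """
    if now is None:
        now = time.time()
    seconds_left = max(reset_epoch - now, 0)
    if remaining <= 0:
        # Budget exhausted: wait for the whole window to reset
        return seconds_left
    # Spread the remaining requests evenly across the rest of the window
    return seconds_left / remaining


# 600 requests left and the window resets in 30 minutes: one request every 3 s
print(pacing_delay(remaining=600, reset_epoch=1_800, now=0))
```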

Why GitHub API Rate Limit Errors Happen in CI/CD

In most DevOps setups, pipelines frequently interact with GitHub APIs:

- Fetching repositories

- Triggering workflows

- Checking build statuses

- Managing pull requests

Common causes include:

- Frequent API polling

- Multiple services making requests at the same time

- Unauthenticated API usage

This is where GitHub API rate limit exceeded errors typically occur.

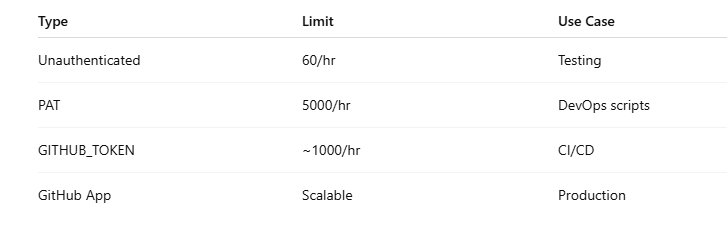
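Frequent polling is often the easiest cause to cut down. GitHub supports conditional requests: send the `ETag` from a previous response back in an `If-None-Match` header, and if nothing has changed GitHub replies `304 Not Modified`. A 304 does not count against the primary rate limit when the request carries a valid `Authorization` header. The `ETagCache` class below is a hypothetical helper, sketched under the assumption that you pass it a `requests.Session` or any object with the same `get` signature.

```python
class ETagCache:
    """Hypothetical helper that re-polls GitHub URLs with conditional requests.

    session -- any object with a requests-style get(url, headers=..., timeout=...),
               for example requests.Session()
    """

    def __init__(self, session):
        self.session = session
        self._entries = {}  # url -> (ETag value, parsed JSON body)

    def get_json(self, url, headers=None):
        request_headers = dict(headers or {})
        cached = self._entries.get(url)
        if cached is not None:
            # Ask GitHub to reply 304 if the resource has not changed
            request_headers["If-None-Match"] = cached[0]

        response = self.session.get(url, headers=request_headers, timeout=30)
        if response.status_code == 304 and cached is not None:
            return cached[1]  # unchanged: reuse the stored body

        response.raise_for_status()
        body = response.json()
        etag = response.headers.get("ETag")
        if etag:
            self._entries[url] = (etag, body)
        return body
```

In a polling loop you would create `ETagCache(requests.Session())` once and call `get_json(url, headers={"Authorization": f"Bearer {token}"})` on each iteration; only responses that actually changed consume your budget.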

GitHub API Rate Limits Explained (Token Types)

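- Unauthenticated Requests: 60 requests per hour, per originating IP address

- Personal Access Token (PAT): 5,000 requests per hour, shared by all tokens belonging to the same user

- GitHub Actions Token (`GITHUB_TOKEN`): 1,000 requests per hour per repository

- GitHub App installation token: at least 5,000 requests per hour, scaling with the number of repositories and users up to 12,500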

Using authenticated requests significantly increases your available limits.
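Authenticating is mostly a matter of sending the token on every request. A minimal sketch of a header builder for the REST API, assuming the token is passed in or available in a `GH_TOKEN` environment variable (an assumed name; use whatever your pipeline sets):

```python
import os


def github_headers(token=None):
    """Build headers for GitHub REST API requests.

    Falls back to the GH_TOKEN environment variable (an assumed name).
    Without any token, requests are unauthenticated and fall under the
    60 requests/hour limit of the caller's IP address.
    """
    token = token or os.environ.get("GH_TOKEN")
    headers = {
        "Accept": "application/vnd.github+json",
        "X-GitHub-Api-Version": "2022-11-28",
    }
    if token:
        headers["Authorization"] = f"Bearer {token}"
    return headers
```

Passing these headers to `requests.get("https://api.github.com/rate_limit", headers=github_headers())` and reading `resources.core.limit` from the JSON body shows which limit your credentials actually receive.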

Token Management Strategies

Use GitHub Apps Instead of Personal Tokens

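GitHub App installation tokens get rate limits that scale with the size of the installation, and they are short-lived and scoped to the repositories the app can access, which also improves security. Because an installation token expires after one hour, generate it inside the workflow rather than storing it as a static secret. The example below uses the `actions/create-github-app-token` action; `APP_ID` and `APP_PRIVATE_KEY` are assumed secret names.

```yaml
# .github/workflows/deploy.yml
name: Deploy with GitHub App

on:
  push:
    branches: [main]

jobs:
  deploy:
    runs-on: ubuntu-latest
    steps:
      # Mint a short-lived installation token from the app's credentials
      # (APP_ID and APP_PRIVATE_KEY are assumed secret names)
      - name: Generate GitHub App token
        id: app-token
        uses: actions/create-github-app-token@v1
        with:
          app-id: ${{ secrets.APP_ID }}
          private-key: ${{ secrets.APP_PRIVATE_KEY }}

      - name: Checkout
        uses: actions/checkout@v4
        with:
          token: ${{ steps.app-token.outputs.token }}

      - name: Deploy
        env:
          GH_TOKEN: ${{ steps.app-token.outputs.token }}
        run: |
          # Your deployment logic here
```

Implement Token Rotation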

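Rotate tokens regularly so that a leaked token stays usable for as short a time as possible. Rotation does not raise your rate limit: PAT requests count against a per-user limit of 5,000 per hour, so every token from the same account draws on the same budget.

```bash
#!/bin/bash
# token-rotation.sh
# Usage: ./token-rotation.sh NEW_TOKEN
set -euo pipefail

NEW_TOKEN=${1:?usage: token-rotation.sh NEW_TOKEN}

# Update the repository secret. Secret names cannot start with GITHUB_,
# so store the token under a custom name such as DEPLOY_TOKEN.
gh secret set DEPLOY_TOKEN --body "$NEW_TOKEN"

# Then revoke the old token in your GitHub settings.
```

Use Repository-Specific Tokens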

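```yaml
# Different tokens for different purposes, each with only the scopes it needs.
# Tokens owned by the same user still share that user's rate limit.
env:
  DEPLOY_TOKEN: ${{ secrets.DEPLOY_TOKEN }}
  NOTIFY_TOKEN: ${{ secrets.NOTIFY_TOKEN }}
  BACKUP_TOKEN: ${{ secrets.BACKUP_TOKEN }}
```

Rate Limit Handling in Code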

Implement Exponential Backoff

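This decorator retries API calls when GitHub reports a rate limit. It waits as long as the server suggests when that information is available, and otherwise backs off exponentially with random jitter.

```python
import random
import time
from functools import wraps

import requests


class RateLimitError(Exception):
    """Raised when GitHub signals a primary or secondary rate limit."""

    def __init__(self, wait_seconds=None):
        super().__init__("GitHub API rate limit hit")
        self.wait_seconds = wait_seconds  # server-suggested wait, if any


def retry_with_backoff(max_retries=3, base_delay=1):
    def decorator(func):
        @wraps(func)
        def wrapper(*args, **kwargs):
            for attempt in range(max_retries):
                try:
                    return func(*args, **kwargs)
                except RateLimitError as e:
                    if attempt == max_retries - 1:
                        raise  # out of retries
                    # Use the server's hint if given, else exponential backoff with jitter
                    delay = e.wait_seconds or base_delay * (2 ** attempt) + random.uniform(0, 1)
                    time.sleep(delay)
            return None  # only reached when max_retries < 1
        return wrapper
    return decorator


@retry_with_backoff(max_retries=5, base_delay=2)
def make_github_request(url, headers):
    response = requests.get(url, headers=headers, timeout=30)
    # GitHub reports rate limiting with either 403 or 429
    if response.status_code in (403, 429):
        retry_after = response.headers.get('Retry-After')
        if retry_after is not None:
            raise RateLimitError(int(retry_after))  # secondary limit
        if response.headers.get('X-RateLimit-Remaining') == '0':
            # Primary limit: wait until the window resets (may be up to an hour)
            reset = int(response.headers.get('X-RateLimit-Reset', 0))
            raise RateLimitError(max(reset - time.time(), 0) + 1)
        if 'rate limit' in response.text.lower():
            raise RateLimitError(60)  # secondary limit without Retry-After
    return response
```

Check Rate Limit Headers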

Always monitor rate limit headers in your requests:

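```python
from datetime import datetime, timezone


def check_rate_limit(response, threshold=100):
    remaining = int(response.headers.get('X-RateLimit-Remaining', 0))
    # X-RateLimit-Reset is a Unix timestamp in seconds (UTC)
    reset_time = int(response.headers.get('X-RateLimit-Reset', 0))

    if remaining < threshold:  # Warning threshold
        reset_at = datetime.fromtimestamp(reset_time, tz=timezone.utc)
        print(f"Warning: Only {remaining} requests remaining")
        print(f"Rate limit resets at: {reset_at.isoformat()}")

    return remaining, reset_time
```

GitHub API Rate Limit Architecture (CI/CD Flow)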

CI/CD Pipeline Optimization

Batch API Requests

Combine multiple operations into single requests:

```yaml
# Instead of multiple individual requests
- name: Get PR details
  env:
    GH_TOKEN: ${{ github.token }}       # gh needs a token inside Actions
    GH_REPO: ${{ github.repository }}   # lets gh run without a checkout
  run: |
    # Bad: Multiple API calls
    gh pr view ${{ github.event.pull_request.number }} --json title
    gh pr view ${{ github.event.pull_request.number }} --json body
    gh pr view ${{ github.event.pull_request.number }} --json files

    # Good: Single API call
    gh pr view ${{ github.event.pull_request.number }} --json title,body,files
```

Cache API Responses

Use GitHub Actions cache to reduce API calls:

```yaml
- name: Cache API response
  uses: actions/cache@v4
  with:
    path: ~/.cache/github-api
    key: ${{ runner.os }}-api-cache-${{ github.sha }}
    # Falls back to the newest cache from an earlier commit, which may be stale
    restore-keys: |
      ${{ runner.os }}-api-cache-

- name: Use cached data
  env:
    GH_TOKEN: ${{ github.token }}
  run: |
    if [ -f ~/.cache/github-api/data.json ]; then
      echo "Using cached data"
    else
      echo "Fetching fresh data"
      mkdir -p ~/.cache/github-api
      gh api repos/${{ github.repository }}/commits > ~/.cache/github-api/data.json
    fi
```

Optimize Workflow Triggers

Reduce unnecessary workflow runs:

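```yaml
# Only run on specific paths
on:
  push:
    branches: [main]
    paths:
      - 'src/**'
      - 'package.json'
      - '.github/workflows/**'
  pull_request:
    types: [opened, synchronize, reopened, ready_for_review]

# Skip draft PRs at the job level ("build" is a placeholder job name).
# A step that runs `exit 0` ends only itself; later steps would still run.
jobs:
  build:
    if: github.event_name != 'pull_request' || github.event.pull_request.draft == false
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
```

Monitoring and Alerting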

Set Up Rate Limit Monitoring

```yaml
- name: Monitor rate limits
  env:
    GH_TOKEN: ${{ github.token }}
  run: |
    # Querying the rate_limit endpoint does not count against the REST API limit
    remaining=$(gh api rate_limit --jq '.resources.core.remaining')
    if [ "$remaining" -lt 100 ]; then
      echo "::warning::Rate limit low: $remaining requests remaining"
    fi
```

Create Rate Limit Dashboard

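```python
from datetime import datetime, timezone

import requests


def send_alert(message):
    # Placeholder: replace with a call to your Slack/Teams webhook
    print(f"ALERT: {message}")


def monitor_rate_limits(token, threshold=100):
    headers = {'Authorization': f'token {token}'}
    # Calling /rate_limit does not count against the REST API limit
    response = requests.get('https://api.github.com/rate_limit', headers=headers, timeout=10)
    response.raise_for_status()

    rate = response.json()['resources']['core']  # REST API ("core") limit

    print(f"Remaining: {rate['remaining']} of {rate['limit']}")
    print(f"Reset time: {datetime.fromtimestamp(rate['reset'], tz=timezone.utc)}")

    if rate['remaining'] < threshold:
        send_alert(f"GitHub rate limit low: {rate['remaining']} remaining")

    return rate
```

GitHub Token Types Comparison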

Choosing the right authentication method directly impacts pipeline stability.

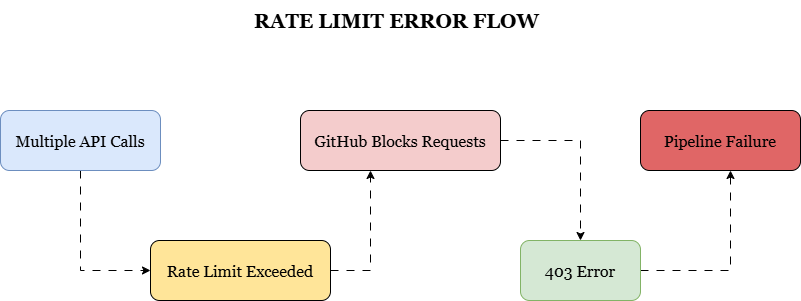

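What Happens When the Rate Limit Is Exceeded?

GitHub rejects further requests with an HTTP 403 or 429 response until the limit resets. For the primary limit, `X-RateLimit-Remaining` is `0` and `X-RateLimit-Reset` holds the reset time in UTC epoch seconds; secondary limits may instead send a `Retry-After` header with the number of seconds to wait. In a pipeline, every step that calls the API fails until then.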
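A minimal sketch that turns GitHub's rate-limit signals (a 403 or 429 status plus the `Retry-After` or `X-RateLimit-*` headers) into a wait time. The function name is hypothetical; the 60-second fallback follows GitHub's guidance to wait at least a minute when a secondary limit sends no `Retry-After` header, and matching "rate limit" in the error message is an assumption about the wording of GitHub's error bodies.

```python
import time


def rate_limit_wait(status_code, headers, message="", now=None):
    """Return seconds to wait before retrying, or None if not rate limited.

    headers -- a case-insensitive mapping such as requests' response.headers
               (a plain dict must use the capitalization below)
    message -- the "message" field of GitHub's JSON error body, if any
    """
    if status_code not in (403, 429):
        return None
    if now is None:
        now = time.time()

    retry_after = headers.get("Retry-After")
    if retry_after is not None:
        return int(retry_after)  # server states how long to wait

    if headers.get("X-RateLimit-Remaining") == "0":
        reset = int(headers.get("X-RateLimit-Reset", now))
        return max(reset - now, 0)  # primary limit: wait for the window reset

    if "rate limit" in message.lower():
        return 60  # secondary limit without Retry-After: wait at least a minute

    return None  # a 403 with no rate-limit signal is usually a permissions error
```

Returning `None` for a plain 403 keeps permission errors from being retried forever; those need a token or scope fix, not a wait.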

Best Practices Summary

- Use GitHub Apps for higher rate limits

- Implement exponential backoff

- Monitor API headers

- Cache API responses

- Batch API requests

- Optimize workflows

- Rotate tokens regularly

Read More

If you are working with cloud and DevOps setups, these guides may help:

- https://www.kubeblogs.com/k3s-vs-kubernetes/

- https://www.kubeblogs.com/aws-t2-vs-t3-vs-t4g/

- https://www.kubeblogs.com/aws-gp2-vs-gp3/

- https://www.kubeblogs.com/s3-security-best-practices/

FAQ: GitHub API Rate Limit Explained

What is GitHub API rate limiting?

GitHub API rate limiting restricts how many API requests you can make within a defined time window.

Why do I get a 403 error in GitHub API?

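GitHub returns a 403 (or 429) response when you exceed your API rate limit. Unauthenticated requests hit this quickly because they are limited to 60 requests per hour. A 403 can also mean the token lacks permission for the resource, so check whether the `X-RateLimit-Remaining` header is `0` to tell the two apart.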

How can I fix GitHub API rate limit exceeded errors?

Use authenticated tokens, reduce unnecessary API calls, and implement caching strategies.

Can GitHub Actions hit rate limits?

Yes, GitHub Actions can hit rate limits, especially in workflows that make frequent API calls.
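Because the `GITHUB_TOKEN` limit is 1,000 requests per hour per repository, a quick back-of-the-envelope check helps before adding more API calls to a workflow. A minimal sketch; the function name and inputs are illustrative, and the estimate assumes calls are spread evenly across runs.

```python
def hourly_api_usage(calls_per_run, runs_per_hour, limit=1000):
    """Estimate GITHUB_TOKEN usage for one repository.

    Returns (requests per hour, fraction of the hourly limit used).
    The default limit is the documented 1,000 requests/hour per repository.
    """
    used = calls_per_run * runs_per_hour
    return used, used / limit


# 40 API calls per run and 30 runs an hour: 1,200 requests, over the limit
used, share = hourly_api_usage(calls_per_run=40, runs_per_hour=30)
print(f"{used} requests/hour ({share:.0%} of the limit)")
```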

Conclusion

GitHub API rate limiting is a common issue in DevOps workflows, but it is predictable once you understand how it works.

By using authenticated tokens, reducing API calls, implementing caching, and leveraging GitHub Apps, you can prevent unexpected failures in your CI/CD pipelines.

Handling API limits properly is essential for building stable and scalable automation systems.